Understanding Robots.txt: The Key to SEO Management in 2025

In the ever-evolving landscape of search engine optimization (SEO), the robots.txt file is a crucial yet often overlooked tool for website owners and SEO professionals alike. As Elmer Boutin explains in his comprehensive article “Robots.txt and SEO: What you need to know in 2025,” mastering robots.txt can significantly improve a site’s visibility and performance. The Robots Exclusion Protocol (REP) has been a cornerstone of the web since its introduction in 1994, and recent updates, including its formal standardization as RFC 9309 in 2022, have made it even more important.

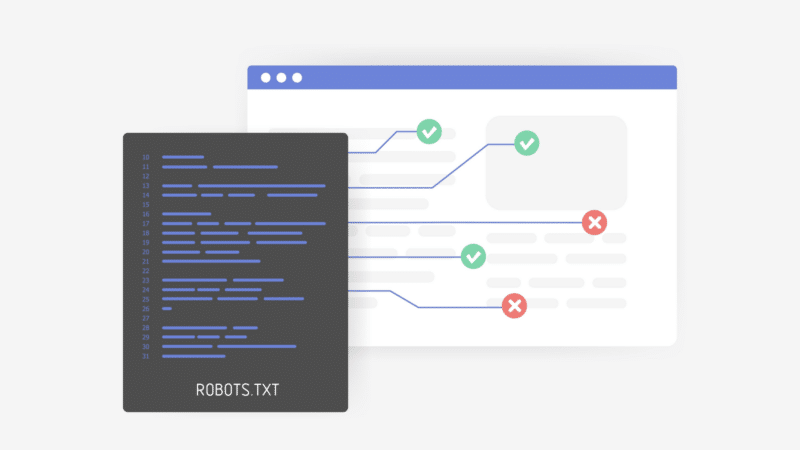

At its core, the robots.txt file tells web crawlers which areas of a website they may crawl and which they should stay out of. By steering crawlers toward the right parts of a site, website owners can make sure important content is crawled first and keep crawlers away from low-value pages. Note that robots.txt controls crawling, not indexing: a blocked URL can still appear in search results if other pages link to it. Focusing crawler activity this way makes better use of the crawl resources search engines allocate to a site and can improve SEO performance overall.

Creating an effective robots.txt file is simple, and getting it right is vital. The basic directives are `User-agent`, which identifies the crawler a group of rules applies to, and `Disallow`, which blocks access to the specified paths. For instance, a well-structured rule can keep crawlers out of pages that contribute little to SEO, such as internal search results, while leaving high-value content open. Wildcards simplify rules further: `*` matches any sequence of characters and `$` marks the end of a URL, so a single line can cover a whole family of URLs.
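
To see how `User-agent` groups and `Disallow` rules interact, the sketch below feeds a hypothetical robots.txt to Python's standard-library `urllib.robotparser` and asks whether sample URLs may be fetched. The paths and the `ExampleBot` agent are invented for illustration. Note that this stdlib parser does plain prefix matching and does not understand the `*` and `$` wildcards, so it is only a rough stand-in for how a search engine reads the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for example.com: the paths and the "ExampleBot"
# user agent are illustrative, not taken from any real site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /cart/

User-agent: ExampleBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Generic crawlers fall under the "*" group: product pages are open,
# internal search and cart URLs are blocked.
print(parser.can_fetch("SomeCrawler", "https://example.com/products/shoes"))  # True
print(parser.can_fetch("SomeCrawler", "https://example.com/search/?q=shoes"))  # False

# ExampleBot matches its own group, which blocks the entire site.
print(parser.can_fetch("ExampleBot", "https://example.com/products/shoes"))  # False
```

A crawler uses the most specific `User-agent` group that names it and falls back to the `*` group otherwise, which is why `ExampleBot` is blocked everywhere while other bots are not.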

Recent updates to the robots.txt framework have also clarified how directives combine. The `Allow` directive can coexist with `Disallow`, letting webmasters block a directory while still opening specific files or subfolders inside it. When both rules match the same URL, Google applies the most specific (longest) one. Such flexibility is essential for managing complex crawling behavior and keeps the most valuable content accessible to search engines.
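
Google's documented approach to conflicting rules (also described in RFC 9309) picks the most specific, that is longest, matching rule, and prefers `Allow` when an `Allow` and a `Disallow` rule are equally specific. The following is a minimal, hypothetical Python sketch of that logic, including the `*` and `$` wildcards; the rule set and the function names are illustrative, and the sketch skips details such as percent-encoding normalization that a production parser would handle.

```python
import re

# Minimal sketch of longest-match rule resolution in the style of RFC 9309:
# "*" matches any run of characters, a trailing "$" anchors the end of the
# path, the longest matching rule wins, and Allow wins a tie.
# The rules below are hypothetical examples.


def pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape everything literally, then turn each escaped "*" back into ".*".
    body = re.escape(pattern).replace(r"\*", ".*")
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(path: str, rules: list[tuple[str, str]]) -> bool:
    """Return True if `path` may be crawled under (directive, pattern) rules."""
    best_len = -1
    best_allow = True  # no matching rule means the path is allowed
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty value matches nothing ("Disallow:" allows all)
        if pattern_to_regex(pattern).match(path):  # match() anchors at the start
            allow = directive.lower() == "allow"
            length = len(pattern)
            # Longer (more specific) patterns win; on a tie, Allow wins.
            if length > best_len or (length == best_len and allow):
                best_len, best_allow = length, allow
    return best_allow


RULES = [
    ("Disallow", "/private/"),
    ("Allow", "/private/press-kit.pdf"),
    ("Disallow", "/*.json$"),
]

print(is_allowed("/private/notes.html", RULES))     # False
print(is_allowed("/private/press-kit.pdf", RULES))  # True
print(is_allowed("/api/data.json", RULES))          # False
print(is_allowed("/api/data.json?v=2", RULES))      # True
print(is_allowed("/blog/post", RULES))              # True
```

Because the longer `Allow` pattern outranks the shorter `Disallow: /private/`, the press kit stays crawlable even though the rest of the directory is blocked, regardless of the order in which the rules appear.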

Another important aspect of robots.txt management is the `Crawl-delay` directive, which asks crawlers to pause between requests, typically interpreted as a number of seconds. It can help protect server performance during heavy crawling, though support varies: Googlebot ignores it, and many large crawlers adjust their request rate automatically. By balancing crawler access against server load, site owners keep pages fast for human visitors and preserve a better user experience.
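
As a sketch of how a crawler that honors `Crawl-delay` might read it, the example below uses `RobotFileParser.crawl_delay()` from Python's standard library on a hypothetical file, then spaces out requests accordingly. `ExampleBot` and the helper `request_offsets` are invented for illustration, and the stdlib parser only accepts whole-number delays.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: "ExampleBot" is an illustrative user agent.
# Crawl-delay is a non-standard extension; crawlers that honor it usually
# read it as seconds between requests, and Googlebot ignores it entirely.
ROBOTS_TXT = """\
User-agent: ExampleBot
Crawl-delay: 10
Disallow: /tmp/

User-agent: *
Disallow: /tmp/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

delay = parser.crawl_delay("ExampleBot")  # taken from ExampleBot's own group
fallback = parser.crawl_delay("OtherBot")  # "*" group sets no delay -> None


def request_offsets(num_requests, delay_seconds):
    """Hypothetical helper: seconds after start at which each request is sent."""
    step = delay_seconds or 0  # no Crawl-delay means no enforced gap
    return [i * step for i in range(num_requests)]


print(delay, fallback)            # 10 None
print(request_offsets(4, delay))  # [0, 10, 20, 30]
```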

Including a link to the site’s XML sitemap in robots.txt, via a `Sitemap:` line, is also a recommended best practice, because it points crawlers directly to a structured list of the site’s URLs and helps them discover content. Care is still needed: common pitfalls such as syntax errors and overly restrictive rules can hurt visibility. Website owners should also remember that robots.txt is advisory and not every bot obeys it, so content that must stay private or out of search results needs additional measures, such as password protection or a `noindex` tag.
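
The sketch below, again using Python's `urllib.robotparser` on a hypothetical draft file, illustrates both halves of this advice: `site_maps()` (available since Python 3.8) returns the `Sitemap` URLs, while a misspelled directive and a missing colon are silently ignored, leaving `/admin/` open to crawlers. The `find_suspect_lines` helper is a hypothetical, deliberately simple lint check, not a full validator.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft robots.txt containing two deliberate mistakes:
# a misspelled directive and a rule with no colon.
DRAFT = """\
User-agent: *
Dissallow: /admin/
Disallow /tmp/

Sitemap: https://example.com/sitemap.xml
"""

# Field names this simple check treats as valid; real crawlers may accept others.
KNOWN_FIELDS = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def find_suspect_lines(text):
    """Return (line_number, line) pairs whose field name is missing or unknown."""
    suspects = []
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        field, sep, _ = line.partition(":")
        if not sep or field.strip().lower() not in KNOWN_FIELDS:
            suspects.append((number, raw))
    return suspects


parser = RobotFileParser()
parser.parse(DRAFT.splitlines())

print(parser.site_maps())  # ['https://example.com/sitemap.xml']
# Both broken rules are silently ignored, so /admin/ is still crawlable.
print(parser.can_fetch("AnyBot", "https://example.com/admin/"))  # True
print(find_suspect_lines(DRAFT))
# [(2, 'Dissallow: /admin/'), (3, 'Disallow /tmp/')]
```

The parser raises no error for either mistake, which is why a separate check, or a testing tool such as the robots.txt report in Google Search Console, is worth running before publishing changes.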

As we look toward 2025, the robots.txt file deserves a place as a core asset in any digital marketing strategy. Boutin’s insights underscore that a well-managed robots.txt can significantly affect a website’s SEO results. Understanding the nuances of this tool is not just beneficial but essential for professionals looking to optimize their online presence.

Given the prominence of short links in digital marketing today, pairing robots.txt management with URL shorteners can amplify visibility. Custom-domain short links can point directly to the optimized pages that robots.txt leaves open to crawlers, directing traffic where it matters and improving user engagement. Leveraging tools like BitIgniter or LinksGPT alongside sound robots.txt practices can therefore make online strategies more efficient.

Understanding and managing the robots.txt file lies at the heart of mastering SEO in the digital realm. As this tool evolves, so should the strategies of developers, marketers, and SEO specialists to maintain a competitive edge in the market.

#BitIgniter #LinksGPT #UrlExpander #UrlShortener #SEO #DigitalMarketing

For further reading, consider accessing resources provided by Google Search Central, which offers in-depth guidance on optimizing with robots.txt and enhancing overall site performance.
